What Happened: Google DeepMind Launches Gemma 4

Google DeepMind has officially released Gemma 4, a new family of open AI models that are both open-weight and open source. According to the Google Developers Blog, Gemma 4 is specifically designed for on-device agentic workflows — meaning these models can carry out multi-step tasks autonomously on mobile phones, desktops, and even Internet of Things (IoT) devices, without constantly sending data back to the cloud.

As Mashable reports, this is a notable shift: Gemma 4 is not merely open-weight (where only the model parameters are shared) but fully open source, giving developers and researchers access to study, modify, and build upon the code. This marks a significant step in Google's open model strategy, which has been building momentum since the original Gemma release.

Key capabilities announced for Gemma 4 include multi-step planning for agentic tasks, support for over 140 languages, and compatibility with LiteRT-LM — Google's framework for running large language models efficiently at the edge. The combination means developers can deploy capable AI assistants that reason through complex tasks directly on a user's device, preserving privacy and reducing latency.

Why It Matters: Agentic AI Moves to the Edge

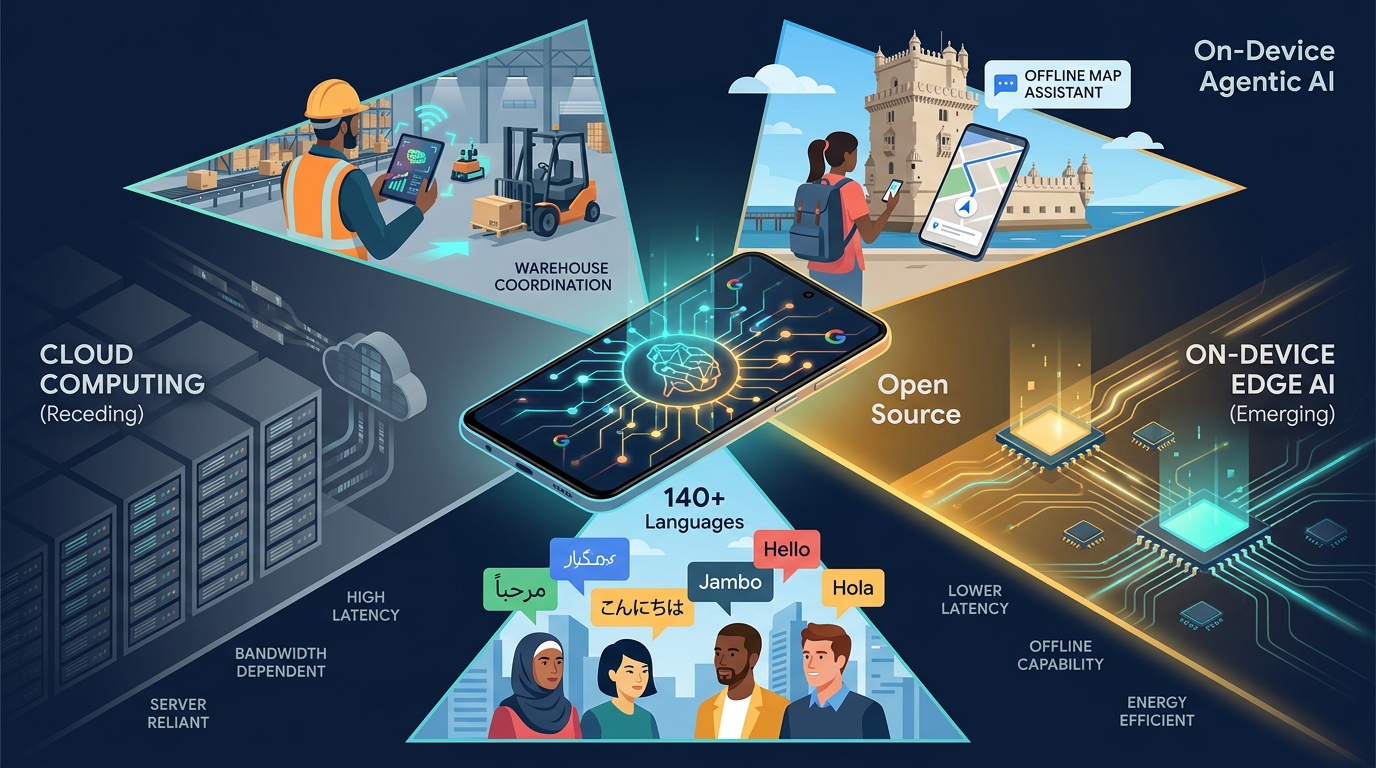

Most powerful AI models today still rely heavily on cloud infrastructure. When you ask a chatbot to help you with a task, your request typically travels to a remote server, gets processed, and the answer comes back over the internet. This works well when you have a fast connection, but it introduces latency, raises privacy concerns, and limits what's possible in offline or bandwidth-constrained environments.

Gemma 4 challenges that approach. By bringing agentic capabilities (the ability for an AI to plan, make decisions, and execute multi-step actions) directly onto local hardware, Google is making it possible for developers to build AI features that keep working even when a device is offline. Consider a warehouse manager using a tablet to coordinate inventory checks: an on-device Gemma 4 model could plan an efficient route through the aisles, update stock records, and flag discrepancies, all without needing a server connection.
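The plan-then-execute pattern behind that warehouse scenario can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Gemma 4 or LiteRT-LM code: the `Warehouse` class, its `update_stock` and `flag` tools, and the `stub_planner` function are all hypothetical, and the planner is a deterministic stand-in for the step where an on-device model would generate the plan. What it shows is the division of labour: the model proposes a sequence of tool calls, and the app executes them against local state only.

```python
from dataclasses import dataclass, field


@dataclass
class Warehouse:
    """Hypothetical on-device state: stock records, aisle locations, flagged issues."""
    records: dict[str, int]
    aisle: dict[str, int]
    flags: list[str] = field(default_factory=list)

    # Local "tools" the agent is allowed to call.
    def update_stock(self, sku: str, counted: int) -> None:
        """Record a physical count and flag it if it differs from the stored record."""
        if self.records.get(sku) != counted:
            self.flag(f"{sku}: record {self.records.get(sku)}, counted {counted}")
        self.records[sku] = counted

    def flag(self, note: str) -> None:
        self.flags.append(note)


def stub_planner(wh: Warehouse, counts: dict[str, int]) -> list[tuple[str, dict]]:
    """Stand-in for the model's planning step (hypothetical).

    Orders the checks by aisle so the manager walks the building once, and emits
    one tool call per SKU. A real app would parse these steps from model output.
    """
    route = sorted(counts, key=lambda sku: (wh.aisle[sku], sku))
    return [("update_stock", {"sku": sku, "counted": counts[sku]}) for sku in route]


def execute(wh: Warehouse, plan: list[tuple[str, dict]]) -> None:
    """Run each planned step against local tools only; no network access."""
    allowed = {"update_stock": wh.update_stock, "flag": wh.flag}
    for tool_name, args in plan:
        allowed[tool_name](**args)  # raises KeyError if the plan names an unknown tool


if __name__ == "__main__":
    wh = Warehouse(records={"A-100": 12, "B-200": 5, "C-300": 8},
                   aisle={"A-100": 3, "B-200": 1, "C-300": 2})
    plan = stub_planner(wh, counts={"A-100": 12, "B-200": 4, "C-300": 8})
    execute(wh, plan)
    print([args["sku"] for _, args in plan])  # ['B-200', 'C-300', 'A-100']
    print(wh.flags)  # ['B-200: record 5, counted 4']
```

Restricting execution to an explicit `allowed` table is a deliberate choice in this sketch: a malformed or unexpected step from the model fails loudly instead of triggering arbitrary behaviour on the device.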

The 140+ language support is equally significant. Many AI models perform well in English but struggle with less widely spoken languages. Gemma 4's broad language coverage means developers in regions like Southeast Asia, Sub-Saharan Africa, or Eastern Europe can build local-language AI tools without waiting for a major company to prioritise their market. This is a practical win for global accessibility.

The open-source nature of the release also matters for trust and adoption. Developers can inspect the model's architecture, run their own safety evaluations, and fine-tune it for specific use cases — something that is much harder with closed, API-only models.

A Practical Example: Building an On-Device Travel Assistant

To make this concrete, imagine you are a mobile app developer creating a travel assistant that works offline. Here is how Gemma 4 could fit into your workflow:

- Download the model: You obtain the Gemma 4 weights and code through Google's official distribution channels, such as Hugging Face and Kaggle (as noted in the Google Developers Blog).

- Deploy with LiteRT-LM: Using Google's LiteRT-LM framework, you package the model so it runs efficiently on Android devices, even mid-range phones with limited RAM.

- Define agentic tasks: You configure the model to handle multi-step travel planning. A user might type: "I'm in Lisbon for two days. Plan a walking itinerary that covers Belém, Alfama, and Baixa, with lunch recommendations near each stop."

- On-device execution: The model reasons through the request locally — determining a logical order of neighbourhoods, estimating walking times, and suggesting restaurants from a cached local database — all without an internet connection.

- Multilingual support: Because Gemma 4 supports 140+ languages, the same assistant can serve travellers in Portuguese, Japanese, Arabic, or Swahili without needing separate models.
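The on-device execution step above can be made more concrete with a small local tool that the assistant might call. This is a hypothetical sketch, not part of Gemma 4 or LiteRT-LM: the `plan_itinerary` and `haversine_km` helpers, the approximate neighbourhood coordinates, the placeholder restaurant names, and the 4.5 km/h walking pace are all assumptions. It orders the stops with a greedy nearest-neighbour heuristic (a simple substitute for full route optimisation), estimates each leg's walking time from straight-line distance, and looks up lunch options in a cached dictionary, so nothing touches the network.

```python
import math

# Hypothetical cached local data: approximate neighbourhood centres (lat, lon)
# and placeholder restaurant entries. A real app would ship its own dataset.
NEIGHBOURHOODS = {
    "Belém": (38.6975, -9.2060),
    "Alfama": (38.7118, -9.1300),
    "Baixa": (38.7107, -9.1378),
}
LUNCH_SPOTS = {
    "Belém": ["Placeholder Café Belém"],
    "Alfama": ["Placeholder Tasca Alfama"],
    "Baixa": ["Placeholder Bistro Baixa"],
}
WALKING_SPEED_KMH = 4.5  # assumed average walking pace


def haversine_km(a, b):
    """Great-circle distance in km between two (lat, lon) points in degrees."""
    lat1, lon1 = map(math.radians, a)
    lat2, lon2 = map(math.radians, b)
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * 6371.0 * math.asin(math.sqrt(h))


def plan_itinerary(stops, start):
    """Greedy nearest-neighbour ordering of stops, with walking-time estimates
    (in whole minutes) and the first cached lunch suggestion for each stop."""
    remaining = [s for s in stops if s != start]
    order, legs = [start], []
    while remaining:
        here = NEIGHBOURHOODS[order[-1]]
        nxt = min(remaining, key=lambda s: haversine_km(here, NEIGHBOURHOODS[s]))
        km = haversine_km(here, NEIGHBOURHOODS[nxt])
        legs.append((order[-1], nxt, round(km / WALKING_SPEED_KMH * 60)))
        order.append(nxt)
        remaining.remove(nxt)
    lunch = {s: LUNCH_SPOTS.get(s, [])[:1] for s in order}
    return order, legs, lunch


if __name__ == "__main__":
    order, legs, lunch = plan_itinerary(["Belém", "Alfama", "Baixa"], start="Belém")
    print(order)  # ['Belém', 'Baixa', 'Alfama']
    for frm, to, minutes in legs:
        print(f"{frm} -> {to}: about {minutes} min on foot")
```

Straight-line distance underestimates real walking routes, so a production app would more likely rely on a cached street graph; the greedy ordering is also not guaranteed to be optimal once the list of stops grows.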

This kind of offline-capable, multilingual, agentic assistant would have been far harder to build with open models a year ago. Gemma 4 makes it a realistic project for a small development team.

What Comes Next

The release of Gemma 4 is likely to accelerate a broader trend: AI capabilities moving away from centralised cloud servers and onto the devices people actually carry and use. For businesses, this means new possibilities for building products that respect user privacy, work in low-connectivity environments, and serve diverse language communities.

Developers can already experiment with Gemma 4 through Google's official channels. As the open-source community begins to fine-tune and extend these models, we can expect specialised variants for healthcare, education, agriculture, and other sectors where on-device AI makes particular sense.

How Brain.mt Can Help

If you're exploring how models like Gemma 4 could fit into your business — whether for on-device applications, multilingual support, or agentic AI workflows — Brain.mt can help you put AI to practical use. Get in touch for a conversation about your specific needs. I also offer dedicated workshops and training sessions on this subject, designed to give your team hands-on experience with the latest open AI models and deployment strategies.

Sources: