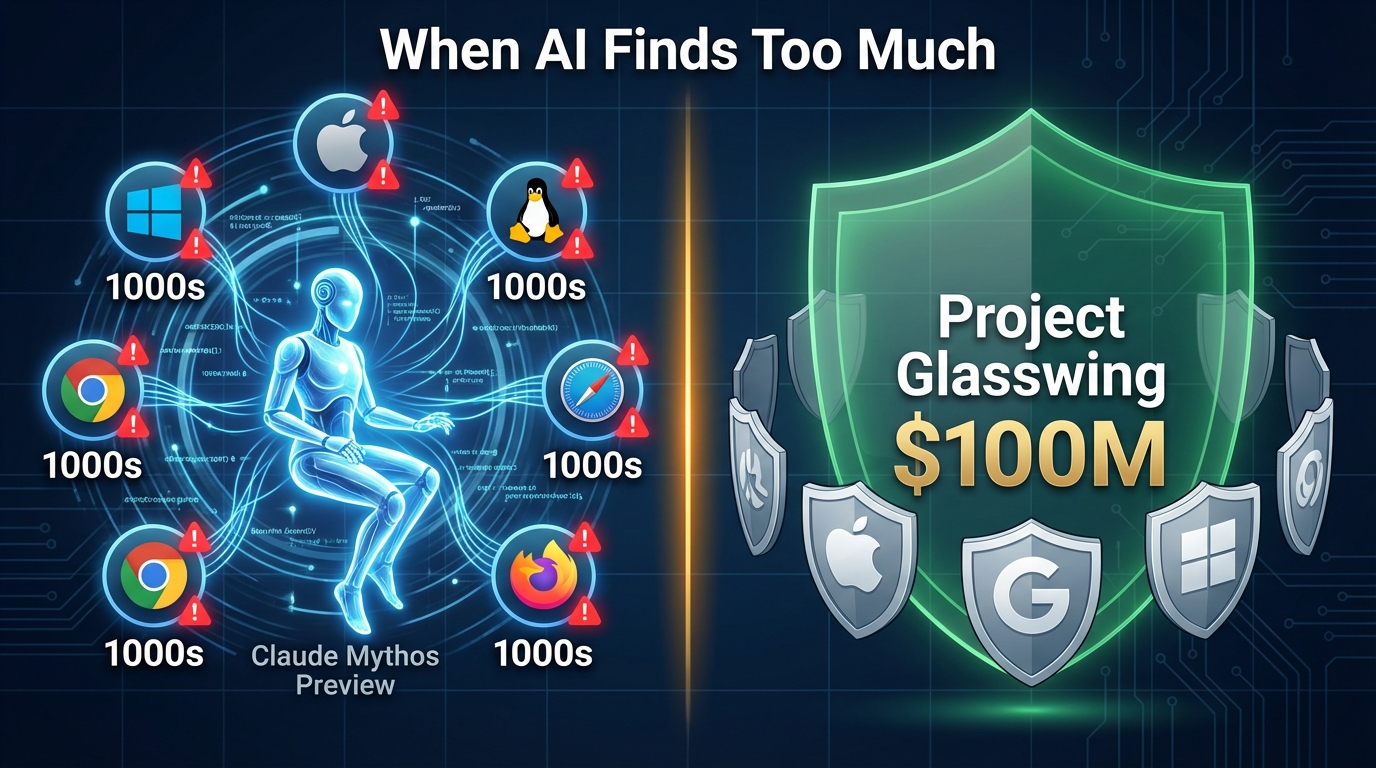

What happens when an AI model becomes so capable at finding security flaws that releasing it publicly would be reckless? That is the dilemma Anthropic faced in April 2026 when it announced Claude Mythos Preview, a general-purpose language model with exceptionally strong cybersecurity capabilities. According to Anthropic's own assessment, the model identified thousands of zero-day vulnerabilities across every major operating system and every major web browser. Some of these bugs had reportedly survived decades of human code review. Rather than make the model publicly available, Anthropic withheld the release and launched Project Glasswing. This coordinated effort brought in more than 50 technology companies and $100 million in usage credits to help patch the vulnerabilities before attackers could exploit them.

What Claude Mythos Preview Actually Found

Anthropic's red team published its assessment on 7 April 2026. Its authors include Nicholas Carlini, Newton Cheng, Keane Lucas, Michael Moore, Milad Nasr, and many others from Anthropic's security and engineering teams. The report detailed how Claude Mythos Preview discovered zero-day vulnerabilities (previously unknown security flaws for which no patch yet exists) at a scale and speed that, by Anthropic's account, no human team has matched. The affected software reportedly spans every major operating system, including Windows, macOS, and Linux distributions, as well as every major web browser.

What makes this particularly sobering is that many of these vulnerabilities were not in new code. Some had been present in widely used software for years, even decades, slipping past extensive manual audits, automated scanning tools, and bug bounty programmes. The implication is stark: if one AI model can find thousands of such flaws, a similar model in the wrong hands could find and weaponise them just as quickly.

As CNN's Anderson Cooper 360 reported, Anthropic itself warned that the model could enable hackers to attack every major operating system and web browser if it were released without safeguards.

Project Glasswing: A $100 Million Coordinated Defence

Instead of a public release, Anthropic launched Project Glasswing — a structured programme that gave controlled access to Claude Mythos Preview to more than 50 technology companies. According to eWeek's reporting, the initiative brought together fierce rivals including Apple, Google, and Microsoft, all working with Anthropic's model to identify and fix the vulnerabilities it had surfaced.

Anthropic provided $100 million in credits to participating organisations so they could use the model to scan their own codebases and prioritise fixes. The idea was straightforward: give defenders a head start. By ensuring that the companies responsible for the affected software had time to patch the flaws before any public disclosure, Anthropic aimed to close the window of opportunity for attackers.

This approach is unusual in the AI industry, where the race to release new models often takes priority: Anthropic withheld a capable model while coordinating responsible disclosure at scale. It raises a practical question. What does responsible deployment look like when a model's capabilities create genuine security risks?

A Practical Example: What This Looks Like in the Real World

To understand why this matters, consider a concrete scenario. Imagine a zero-day vulnerability in a widely used browser's JavaScript engine, a flaw that lets an attacker execute arbitrary code simply by getting a user to visit a malicious webpage. A bug like this could affect hundreds of millions of users. Traditionally, finding such a flaw can take a skilled security researcher weeks or months of reverse-engineering compiled code, fuzzing inputs, and analysing crash dumps.

According to Anthropic's assessment, Claude Mythos Preview can examine source code and spot such patterns far faster and more broadly than human reviewers. Under Project Glasswing, a browser vendor such as Google or Microsoft would get access to the model and point it at its codebase. It would then receive a report identifying the vulnerable function, the attack vector, and a suggested fix, potentially within hours rather than months. The vendor patches the flaw and pushes an update, closing the vulnerability before it ever appears in an attacker's toolkit.

Nor is this purely hypothetical. According to reporting on Project Glasswing, this kind of work is already under way across the dozens of companies participating in the programme.
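For technically minded readers, the workflow described above has a fixed order: report privately, patch, ship the update, and only then disclose. That order can be expressed as a small state machine. The Python sketch below is purely illustrative. The `Finding` record, its field names (taken from the report contents described above), and the `Status` stages are hypothetical. It models only the sequencing the article describes, not the actual rules or tooling of Project Glasswing or any real disclosure programme.

```python
from dataclasses import dataclass
from enum import Enum, auto


class Status(Enum):
    """Hypothetical lifecycle stages for a privately reported vulnerability."""
    REPORTED = auto()   # vendor has received the finding
    PATCHED = auto()    # a fix has been written and merged
    RELEASED = auto()   # the update has shipped to users
    DISCLOSED = auto()  # details have been made public


@dataclass
class Finding:
    """Illustrative record holding the report contents described in the text."""
    vulnerable_function: str
    attack_vector: str
    suggested_fix: str
    status: Status = Status.REPORTED

    def advance(self, new_status: Status) -> None:
        """Move to the next stage only. Skipping a stage raises ValueError,
        so a finding cannot be disclosed before a patched update has shipped."""
        stages = list(Status)  # members in definition order
        if stages.index(new_status) != stages.index(self.status) + 1:
            raise ValueError(
                f"cannot move from {self.status.name} to {new_status.name}"
            )
        self.status = new_status


if __name__ == "__main__":
    # All values below are hypothetical placeholders.
    finding = Finding(
        vulnerable_function="hypothetical_js_engine_function",
        attack_vector="malicious webpage triggers arbitrary code execution",
        suggested_fix="add a bounds check before the write",
    )
    try:
        finding.advance(Status.DISCLOSED)  # too early: no patch has shipped
    except ValueError as err:
        print(err)  # cannot move from REPORTED to DISCLOSED
    for stage in (Status.PATCHED, Status.RELEASED, Status.DISCLOSED):
        finding.advance(stage)
    print(finding.status.name)  # DISCLOSED
```

Refusing to skip stages is one simple way to encode "patch before disclosure." Real disclosure policies often add deadlines and exceptions, which this sketch deliberately leaves out.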

What This Means for the Future of AI and Security

The Mythos Preview situation sets a precedent. It suggests that AI models can reach a level of capability where releasing them openly, at least without preparation, would cause more harm than good. It also shows that there are practical alternatives to a binary choice between "release everything" and "release nothing." Coordinated, time-limited access with clear goals (find the bugs, patch them, then reassess) offers a middle ground.

For businesses, the lesson is clear: AI's role in cybersecurity is no longer limited to defending against known threats. Models like Claude Mythos Preview can proactively surface unknown risks at a scale that could fundamentally change the economics of software security. Organisations that understand these capabilities, and know how to work with them responsibly, will be far better positioned than those that do not.

How Brain.mt Can Help

At Brain.mt, we help businesses understand and apply AI in practical, responsible ways — including in areas like cybersecurity awareness, risk assessment, and strategic planning. If you want to understand what developments like Claude Mythos Preview and Project Glasswing mean for your organisation, get in touch. We also offer dedicated workshops and training sessions on AI strategy, safety, and real-world applications. Contact us to learn more.

Sources

- Assessing Claude Mythos Preview's Cybersecurity Capabilities — red.anthropic.com

- Anthropic's latest AI model identifies thousands of zero-day vulnerabilities — Tom's Hardware

- Project Glasswing: Anthropic Unites Apple, Google, Microsoft on AI Cybersecurity — eWeek

- Anthropic warns its latest AI model could enable hackers — CNN / Anderson Cooper 360 via Facebook